In this tutorial, we walk you through setting up your deep learning environment on Google Cloud Platform (GCP). Please follow the steps carefully, and do not hesitate to ask for help if you run into problems.

Important note: this setup is part of Assignment 0.

Contents

- Step 1: Log into Google Cloud Account

- Step 2: Create your project and GCE instance

- Step 3: Connect to Your Instance

- Step 4: Check the tools

- Step 5: Jupyter Notebook

- *Step 6: Other useful tools in GCP

Step 1: Log into Google Cloud Account

- Go to Google Cloud Console (https://cloud.google.com/) and sign in with your LionMail account (yourUNI@columbia.edu). If you sign up with some other account, you will not be able to use the Google coupons provided by the instructors.

-

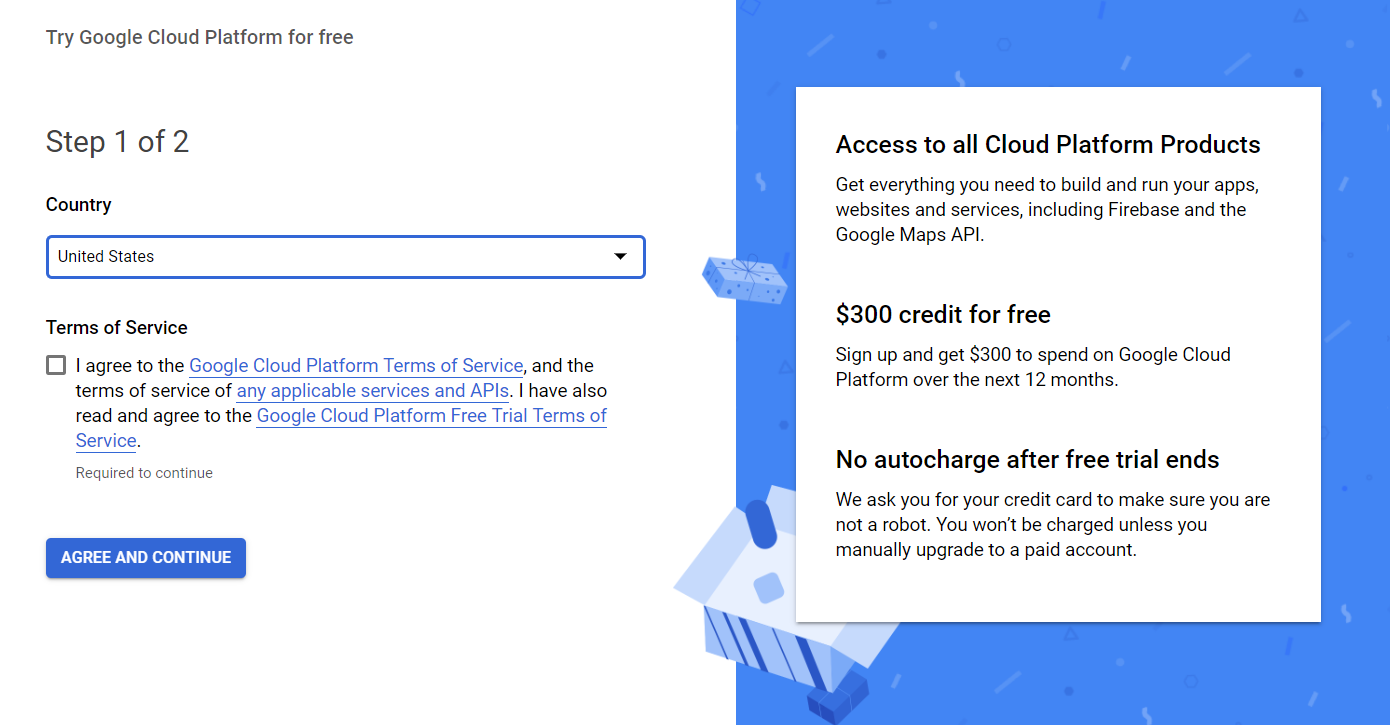

If you are a new user of Google cloud, you can get $300 credits for free by clicking 'Get started for free'. You can explore the GCP for a while with the free credits as you like. After the add/drop period, students will get educational coupons from instructors to cover course-related google cloud expenses.

- Redeem your educational Google Cloud coupons (coupon distribution method TBD). GPU usage costs approximately $1/hour, so please manage your computational resources wisely. A good approach is to create a deep learning environment on your local computer and debug your code there, and to run it in Google Cloud only when more powerful computational resources are needed. Note that some assignments can be executed even on non-GPU personal computers.

  If you have received the coupon code, go to https://console.cloud.google.com/education, select your LionMail account on the top right, and redeem the coupon.

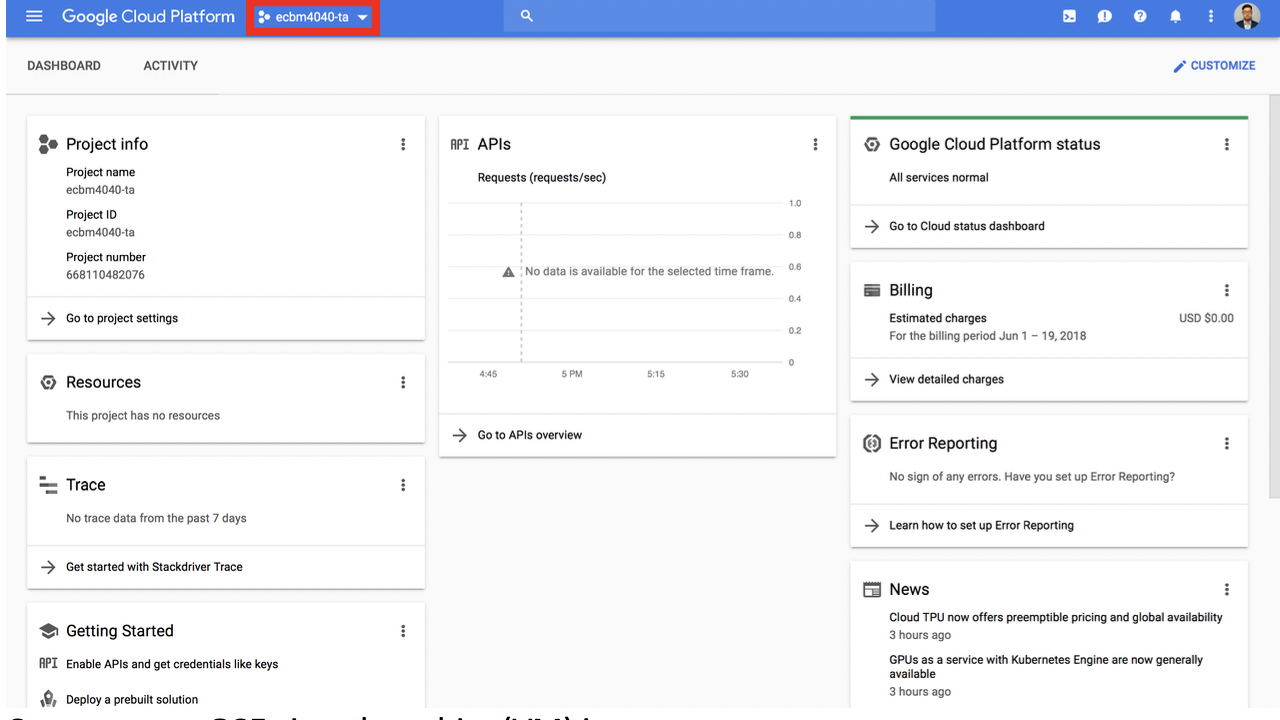

Now, you can visit your Google Cloud dashboard.
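To put the approximate $1/hour GPU rate quoted above in perspective, here is a minimal sketch comparing an instance that is left running for a whole week with one that runs only a few hours a day. The function name, the 3-hours-per-day usage pattern, and the flat rate are illustrative assumptions; actual GCP charges depend on the machine configuration.

```python
def estimate_gpu_cost(hours, rate_per_hour=1.0):
    """Return the estimated cost in dollars for `hours` of GPU time.

    rate_per_hour defaults to the approximate $1/hour figure quoted in
    this tutorial; it is an assumption, not an official GCP price.
    """
    if hours < 0:
        raise ValueError("hours must be non-negative")
    return hours * rate_per_hour


# An instance that is never stopped for one week (24 hours x 7 days).
always_on = estimate_gpu_cost(24 * 7)

# An instance that runs 3 hours per day for one week and is stopped otherwise.
short_sessions = estimate_gpu_cost(3 * 7)

print(f"Left running for a week: ${always_on:.2f}")
print(f"3 hours/day for a week:  ${short_sessions:.2f}")
```

Under these assumptions, the difference is the reason for debugging locally and stopping the instance whenever it is idle.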

Step 2: Create your project and Google Compute Engine (GCE) instance

-

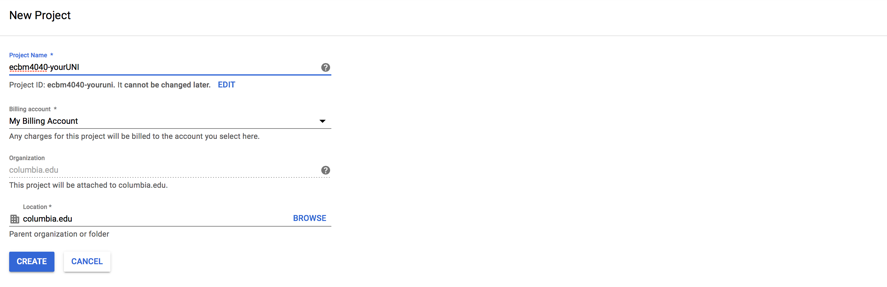

Create your project, click 'Select a project' -> 'NEW PROJECT'. For administrative reasons, we request that you use 'ecbm4040-yourUNI' as your project name. Choose the correct billing account, the one from the coupon. After a few seconds, you should be able to see your newly created project’s homepage.

- Edit your GPU quota.

  Make sure to select the project you just created, 'ecbm4040-yourUNI'. If you are using the project or Google Cloud for the first time, you may not be able to change the quota immediately; you may first have to go through the step "Create a new GCE virtual machine (VM) instance".

- Go to ‘IAM & Admin’ -> ‘Quotas’.

Since December 2018, new users have to request quotas when starting a project.

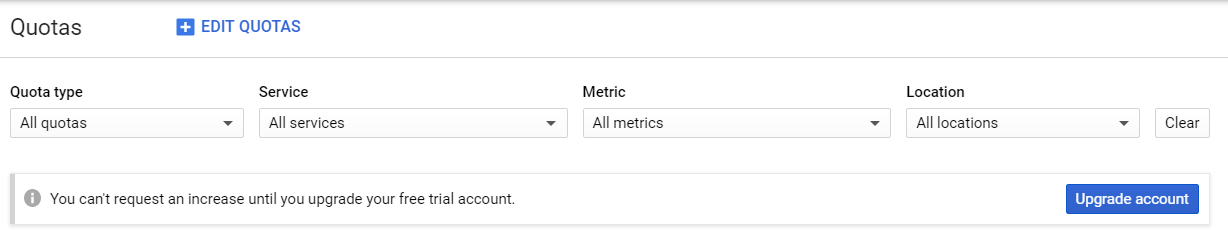

If you haven't upgraded your free trial ($300) account, you will see this warning.

Click 'Upgrade account'. You can then request a quota increase.

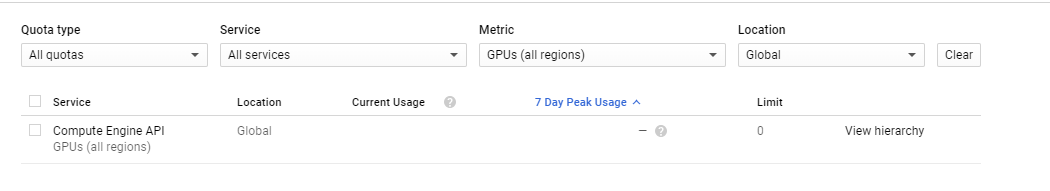

- In the filter, choose Quota type 'All quotas', then set Metric to 'GPUs (all regions)' and Location to 'Global'.

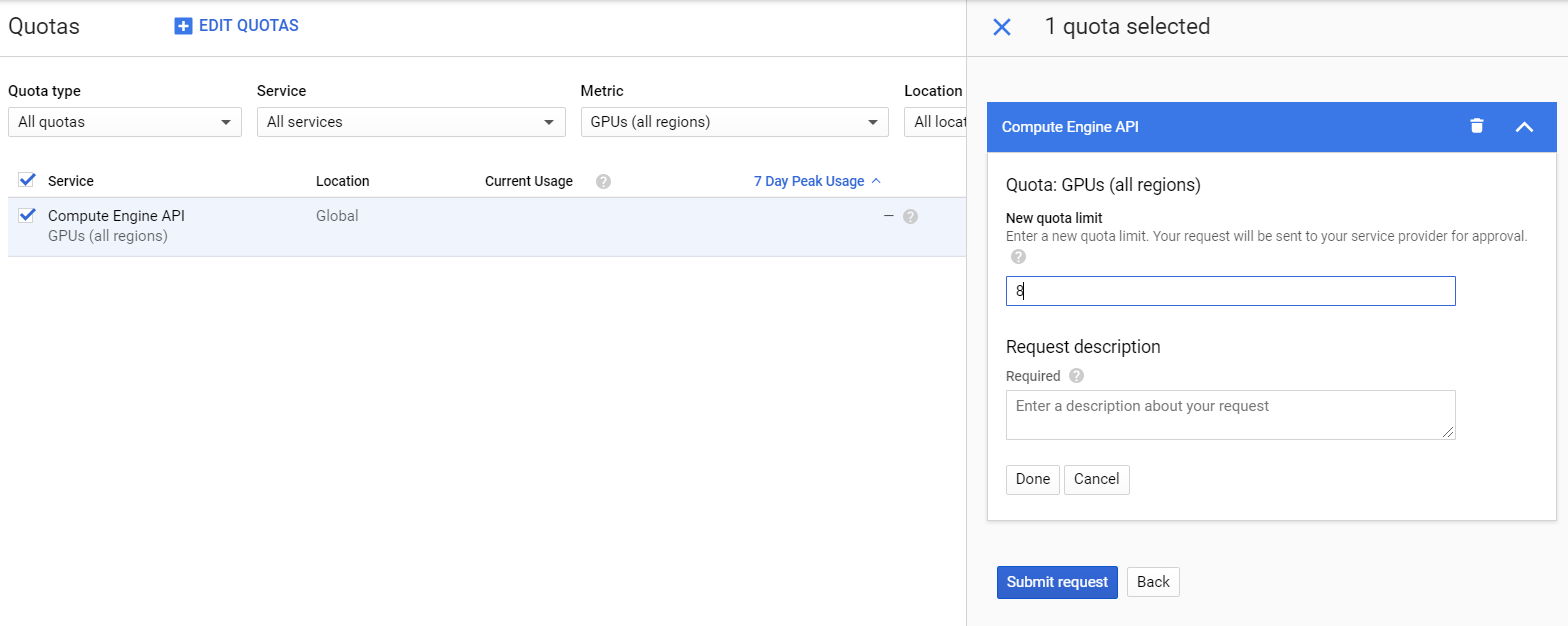

Click EDIT QUOTAS and set the new quota limit to 1 or more.

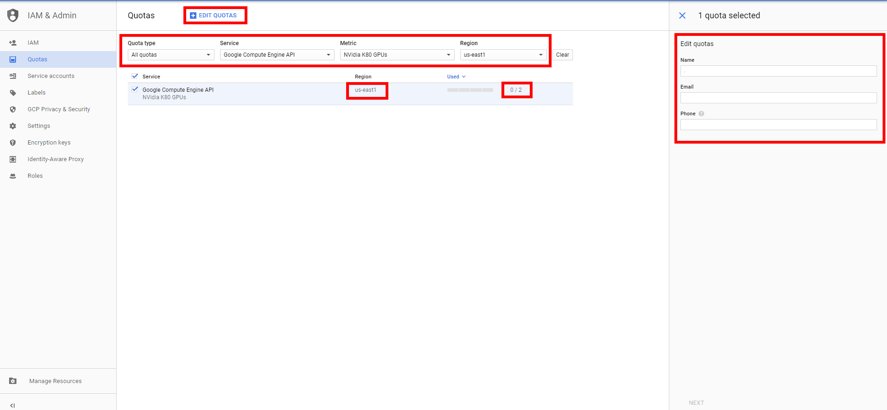

- Then you can request a GPU quota increase in each region. Locate 'Google Compute Engine API - NVidia K80 GPUs' in the us-east1 region to check your quota. (K80 GPUs are enough for starters; you can also request more powerful P100 GPUs, but remember that they cost more.)

  By default, your quota should be 0. Select it, click 'Edit Quotas', and submit a request. Wait for Google to process your request; you should receive an e-mail from Google informing you of the success. According to Google, quota requests are typically processed in one or two business days, but the actual waiting period may vary from a few hours to 4 days or even longer, depending on the general quota demand. Requests typically take longer to process at the end of the semester, so please plan your time accordingly.


- Create a new GCE virtual machine (VM) instance. We provide you with 2 options:

- To create a VM instance based on the image provided by the ECBM E4040 TAs, which includes everything you need (CUDA, Miniconda, Jupyter Notebook, TensorFlow, etc.), proceed to the following steps.

- If you're interested in exploring GCP and would like to configure your own VM instance from scratch, see gcp-from-scratch.

Now you can create your instance:

- Go to ‘Compute Engine’ -> ‘VM instances’, click ‘create’.

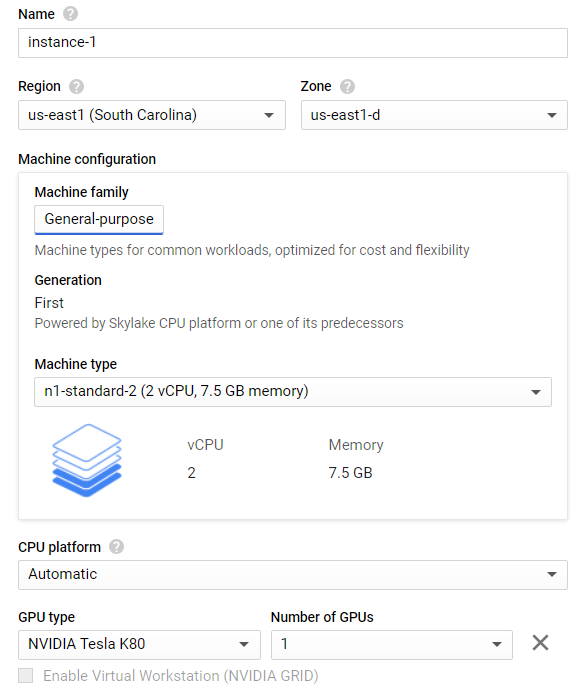

- Define your instance’s name and set zone to ‘us-east1-d’.

- Configure your instance settings. You can choose the number and type of CPUs and GPUs and the memory size as you wish, but keep the cost in mind!

  Note: check GPU availability on this site; you may need to choose a different zone.

- Here are the suggested settings (they should be enough for all assignments):

- Region: us-east1, Zone: us-east1-d

- CPU: 2, Memory: 7.5GB

- GPU: 1 NVIDIA Tesla K80

-

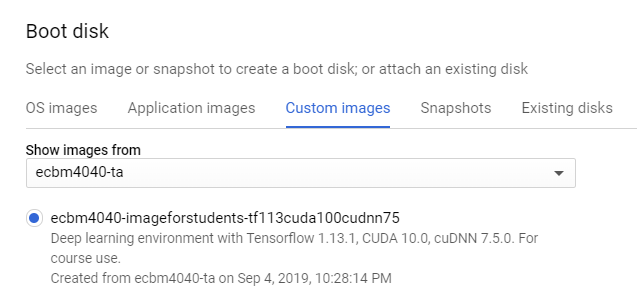

In boot disk section, select from custom image ‘ecbm4040-imageforstudents-tf113cuda100cudnn75’ under project ‘ecbm4040-ta’.

In this image, we will use tensorflow 1.13.1, CUDA 10.0 and cuDNN v7.5.

50GB of disk size should be enough for course use.

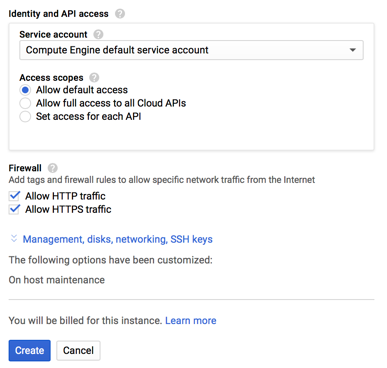

- Check ‘Allow HTTP traffic’ and ‘Allow HTTPS traffic’.

Note: you can always create another instance with more computational power for your project; it follows the same procedure.

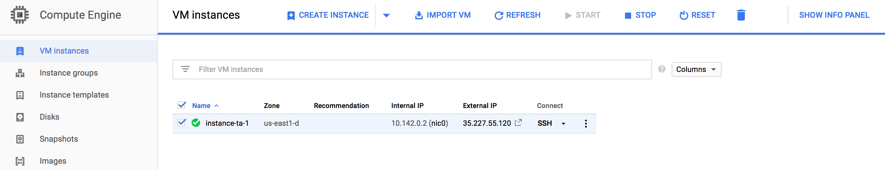

- Wait for several minutes. The newly created VM instance will be running once its creation completes.

Step 3: Connect to Your Instance

Before explaining how to connect to the instance, we want to remind you to STOP the instance when you are not using it. You are charged only while the instance is running, so remember to stop the instance every time you finish your work. You do not have to delete the instance; just stop it.

There are two methods to establish a connection to an instance.

Note: the image which we provide to you has all the tools installed under the user 'ecbm4040'. If you ssh into another user name, some of the components (such as Miniconda) will not work.-

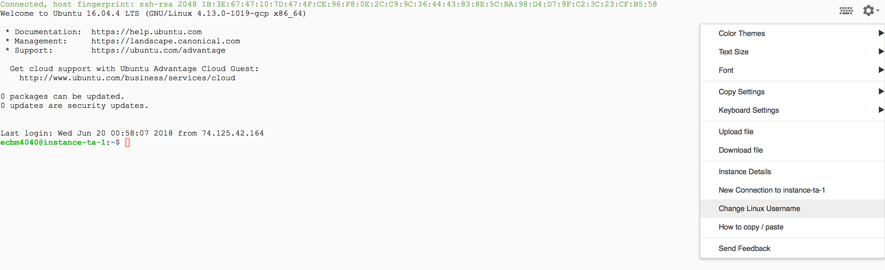

Method 1 - Connect online: (from your googleCloud/ComputeEngine/VMinstances window) Click the 'SSH' button next to your running instance. This is very useful for uploading/downloading files from a local/personal computer to the instance which is running in the cloud.

After the connection is established (a cmd window will appear), change your Linux user name into 'ecbm4040'.

-

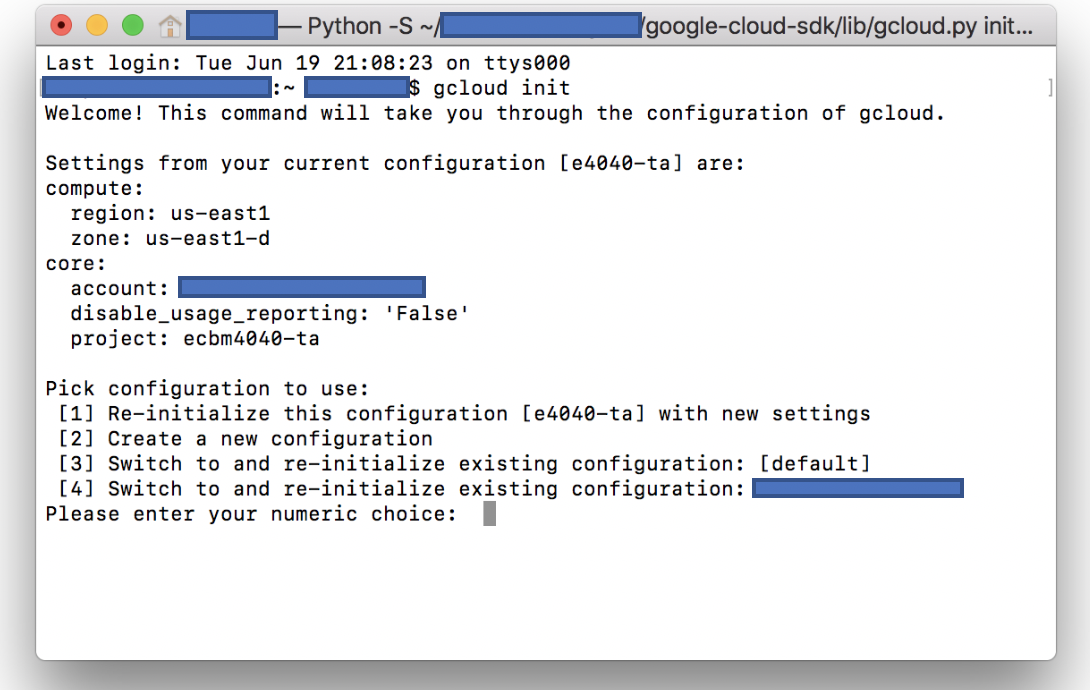

Method 2 - Connect using the Google Cloud SDK: Recommended, and will be used later for Jupyter Notebook. For this method, you need to install the Google Cloud SDK first. After the installation, the instructions can be called via command lines from the local computer. Open a console (cmd window), and initialize your Gcloud account using

gcloud initcommand. If this is the first activity after you installed the SDK, you will be directed to a website. The information such as zone or project id should conform with your previous online settings.After the initialization, type

gcloud initagain, and you should see something like the following:

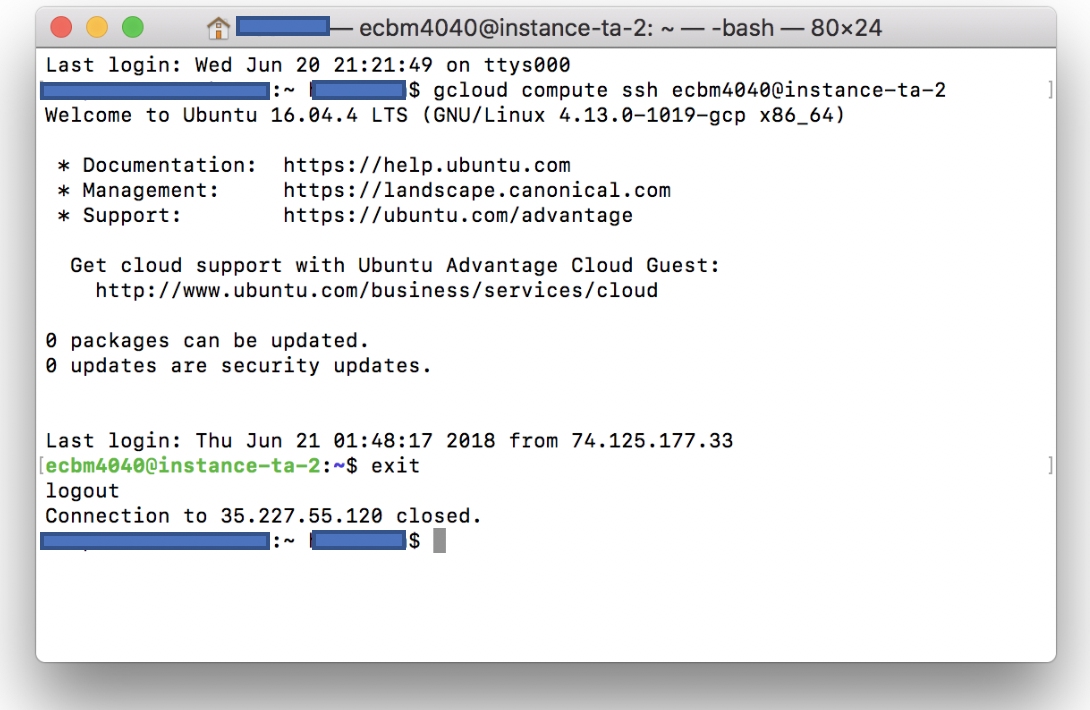

Now you can use the ssh tool provided by the Google Cloud SDK to connect to your instance (running in the cloud) with the following command:

    gcloud compute ssh ecbm4040@your-instance-name

When you are done, type `exit` to close the connection.
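The connect, work, stop routine from this step can also be scripted. The sketch below only builds the `gcloud` command lines as argument lists; it does not run them. The helper names are hypothetical, the instance name and zone are the placeholders used in this tutorial, and it assumes the Google Cloud SDK is installed on your local computer.

```python
import shlex


def ssh_command(instance, user="ecbm4040", zone="us-east1-d"):
    """Build the argument list that opens an SSH session as `user`."""
    return ["gcloud", "compute", "ssh", f"{user}@{instance}", "--zone", zone]


def stop_command(instance, zone="us-east1-d"):
    """Build the argument list that stops (but does not delete) the instance."""
    return ["gcloud", "compute", "instances", "stop", instance, "--zone", zone]


# Print the commands in a form that can be pasted into a terminal.
for cmd in (ssh_command("your-instance-name"), stop_command("your-instance-name")):
    print(" ".join(shlex.quote(part) for part in cmd))
```

Passing either list to `subprocess.run` would execute it; keeping the stop command next to the connect command is one way to avoid forgetting to stop the instance.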

Step 4: Check the Tools

This step will check whether the tools have been properly installed, and if they are available.
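Before going through the individual checks, a quick way to see whether the command-line tools are on your `PATH` at all is sketched below. The helper name `find_tools` is hypothetical and the tool list is taken from this step. Finding a tool on the `PATH` does not prove that it works, so the manual checks are still needed.

```python
import shutil


def find_tools(names):
    """Map each tool name to its full path, or None if it is not on PATH."""
    return {name: shutil.which(name) for name in names}


# Tool names used in this step of the tutorial.
for tool, path in find_tools(["nvidia-smi", "nvcc", "conda"]).items():
    print(f"{tool}: {path if path else 'NOT FOUND'}")
```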

-

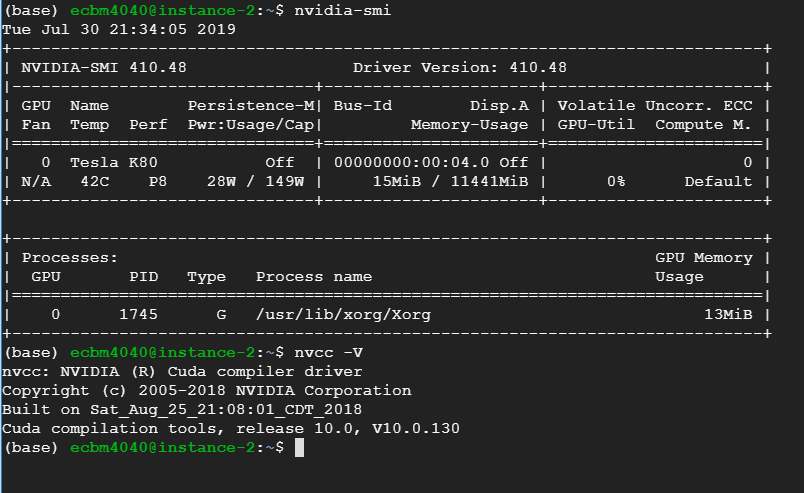

CUDA examination: First, check whether a GPU device is available:

ecbm4040@your-instance-name: $ nvidia-smiIf GPU is available, then it will show some basic info of your GPU device.

Second, verify CUDA installation.

ecbm4040@your-instance-name: $ nvcc -VIf it is correctly installed, then this command will return the information about CUDA version.

- Miniconda is a light version of Anaconda, which helps you manage Python and other software versions and environments (note that for the local computer setup, we described the installation of Anaconda 3). In our source image in the cloud, a virtual Python (conda) environment 'envTF113' has been set up. Use the following command to activate it. We also recommend using the same environment for your future assignments.

      ecbm4040@your-instance-name: $ source activate envTF113

  After the activation, you can review all installed packages with

      (envTF113)ecbm4040@your-instance-name: $ conda list

- TensorFlow is an open-source library for deep learning provided by Google. The version of TensorFlow in the cloud instance which we provide is 1.13.1; this is the version that must be used to complete the assignments for E4040 in 2019. If you want to use TensorFlow version 2.0 for some other project, you can either follow the TF 2.0 installation part on this page: gcp-from-scratch, or follow those instructions to create the instance by yourself.

  To check the installation of TensorFlow 1.13, type `python` and try to run the following code. (Note: don't confuse the Python prompt `>>>` with the Linux command prompt `$`.)

      >>> import tensorflow as tf
      >>> # Creates a graph.
      >>> a = tf.constant([1.0, 2.0, 3.0, 4.0, 5.0, 6.0], shape=[2, 3], name='a')
      >>> b = tf.constant([1.0, 2.0, 3.0, 4.0, 5.0, 6.0], shape=[3, 2], name='b')
      >>> c = tf.matmul(a, b)
      >>> # Creates a session with log_device_placement set to True.
      >>> sess = tf.Session(config=tf.ConfigProto(log_device_placement=True))
      >>> # Runs the instructions (for TensorFlow 1.13 sessions are required)
      >>> print(sess.run(c))

  If TensorFlow is correctly installed and is using the GPU in the backend, you will see something like this:

      MatMul: (MatMul): /job:localhost/replica:0/task:0/device:GPU:0
      2019-09-05 01:58:10.257637: I tensorflow/core/common_runtime/placer.cc:1059] MatMul: (MatMul)/job:localhost/replica:0/task:0/device:GPU:0
      a: (Const): /job:localhost/replica:0/task:0/device:GPU:0
      2019-09-05 01:58:10.257722: I tensorflow/core/common_runtime/placer.cc:1059] a: (Const)/job:localhost/replica:0/task:0/device:GPU:0
      b: (Const): /job:localhost/replica:0/task:0/device:GPU:0
      2019-09-05 01:58:10.257763: I tensorflow/core/common_runtime/placer.cc:1059] b: (Const)/job:localhost/replica:0/task:0/device:GPU:0
      [[22. 28.]
       [49. 64.]]

  Type `exit()` to quit Python.
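To see where the final matrix in the expected output comes from, the same product can be computed by hand. This sketch uses plain Python lists rather than TensorFlow, so it runs on any machine; the `matmul` helper is written here for illustration only.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    # zip(*b) iterates over the columns of b.
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


# The same six values as in the TensorFlow check, reshaped to 2x3 and 3x2.
a = [[1.0, 2.0, 3.0],
     [4.0, 5.0, 6.0]]
b = [[1.0, 2.0],
     [3.0, 4.0],
     [5.0, 6.0]]

c = matmul(a, b)
for row in c:
    print(row)
```

The first entry, for example, is 1*1 + 2*3 + 3*5 = 22, which matches the value printed by the session.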

Step 5: Jupyter Notebook

Next, we show how to open a Jupyter notebook on your Google Cloud VM instance.

Jupyter has been installed in the 'envTF113' virtual environment.

- Configure your Jupyter notebook

  First, generate a new configuration file.

      (envTF113)ecbm4040@your-instance-name: $ jupyter notebook --generate-config

  Open this config file.

      (envTF113)ecbm4040@your-instance-name: $ vi ~/.jupyter/jupyter_notebook_config.py

  Add the following lines to the file. If you are new to Linux and don't know how to use the vi editor, see this quick tutorial: https://www.cs.colostate.edu/helpdocs/vi.html

      c = get_config()
      c.NotebookApp.ip = '*'
      c.NotebookApp.open_browser = False
      c.NotebookApp.port = 9999  # or other port number

- Generate your Jupyter login password, or press Enter for no password.

      (envTF113)ecbm4040@your-instance-name: $ jupyter notebook password
      Enter password:
      Verify password:
      [NotebookPasswordApp] Wrote hashed password to /Users/you/.jupyter/jupyter_notebook_config.json

- Open Jupyter notebook.

      (envTF113)ecbm4040@your-instance-name: $ jupyter notebook

  Now your Jupyter notebook server is running remotely. You need to connect your local computer to the server in order to view your Jupyter notebooks in your browser.

-

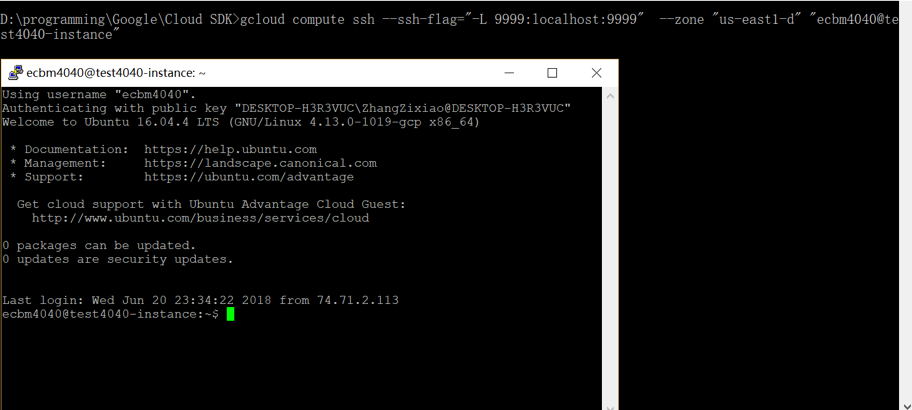

Open a console and use SSH to connect to jupyter notebook. Type in following code to set up a connection with your remote instance. Note that in “-L 9999:localhost:9999”, the first “9999” is your local port and you can set another port number if you want. The second “9999” is the remote port number and it should be the same as the port that jupyter notebook server is running.

gcloud compute ssh --ssh-flag="-L 9999:localhost:9999" --zone "us-east1-d" "ecbm4040@your-instance-name" -

- Open your browser (Chrome, IE, etc.)

  Go to http://localhost:9999 or https://localhost:9999 and you will be directed to your remote Jupyter server. Type in the Jupyter password that you created before, and you can then enter your home directory.

- An optional way to connect to Jupyter Notebook using SSH in the GCP console, without the Google Cloud SDK:

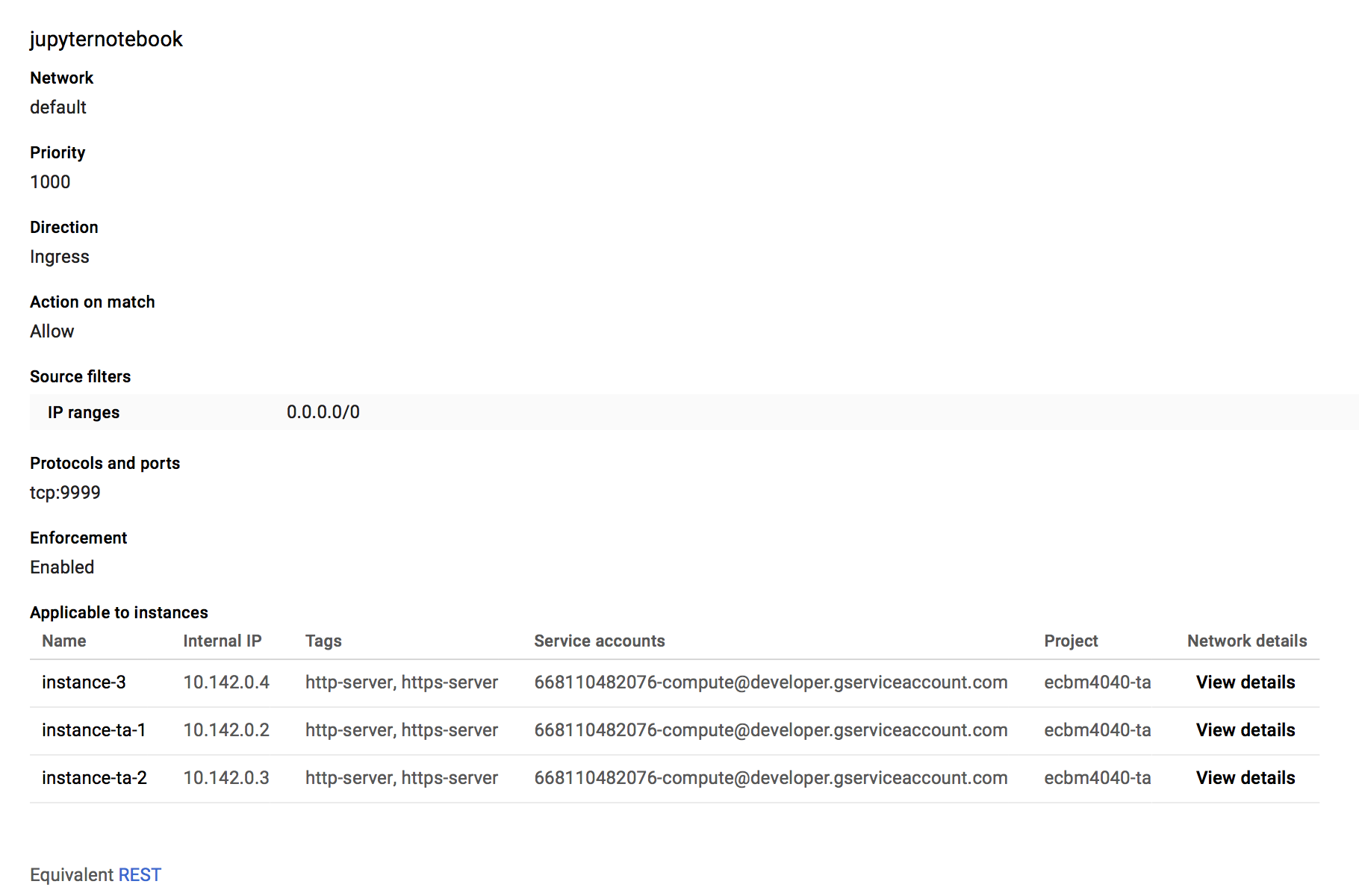

- Go to 'VPC network' -> 'Firewall rules', click 'CREATE FIREWALL RULE'.

- Create a rule named 'jupyternotebook' like below.

- Go back to your VM instances page, copy your External IP, and open http://yourExternalIP:9999 in your browser. You should be directed to the Jupyter notebook homepage.
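For the SSH tunnel described earlier in this step, the local and remote ports in the `-L` flag can differ. The sketch below builds the tunnel command for a chosen pair of ports without running it. The helper name is hypothetical, and the instance name and zone are the placeholders used above.

```python
def tunnel_command(instance, local_port=9999, remote_port=9999,
                   user="ecbm4040", zone="us-east1-d"):
    """Build the gcloud command that forwards local_port to remote_port.

    remote_port must match the port the Jupyter server listens on
    (c.NotebookApp.port in the config file); local_port is your choice.
    """
    forward = f"-L {local_port}:localhost:{remote_port}"
    return ["gcloud", "compute", "ssh", f"--ssh-flag={forward}",
            "--zone", zone, f"{user}@{instance}"]


# Example: local port 8888 forwarded to the remote Jupyter port 9999.
cmd = tunnel_command("your-instance-name", local_port=8888)
print(cmd)
print("Then browse to http://localhost:8888")
```

Choosing a different local port is useful when 9999 is already taken on your own computer, for example by a local Jupyter server.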

Now you have finished the prerequisites of Assignment 0. Please proceed to the rest of it.

*Step 6: Other useful tools in GCP

- Tmux, a terminal multiplexer. It allows you to run multiple programs in multiple window panes within one terminal. That capability makes Tmux a popular tool on remote servers (such as GCP). If you want to explore more applications of Tmux, see this well-written note: http://deeplearning.lipingyang.org/2017/06/28/tmux-resources/

  Below we show how to use it and why it is helpful on GCP.

- First, activate your virtual envioronment.

ecbm4040@your-instance-name: $ source activate envTF113 -

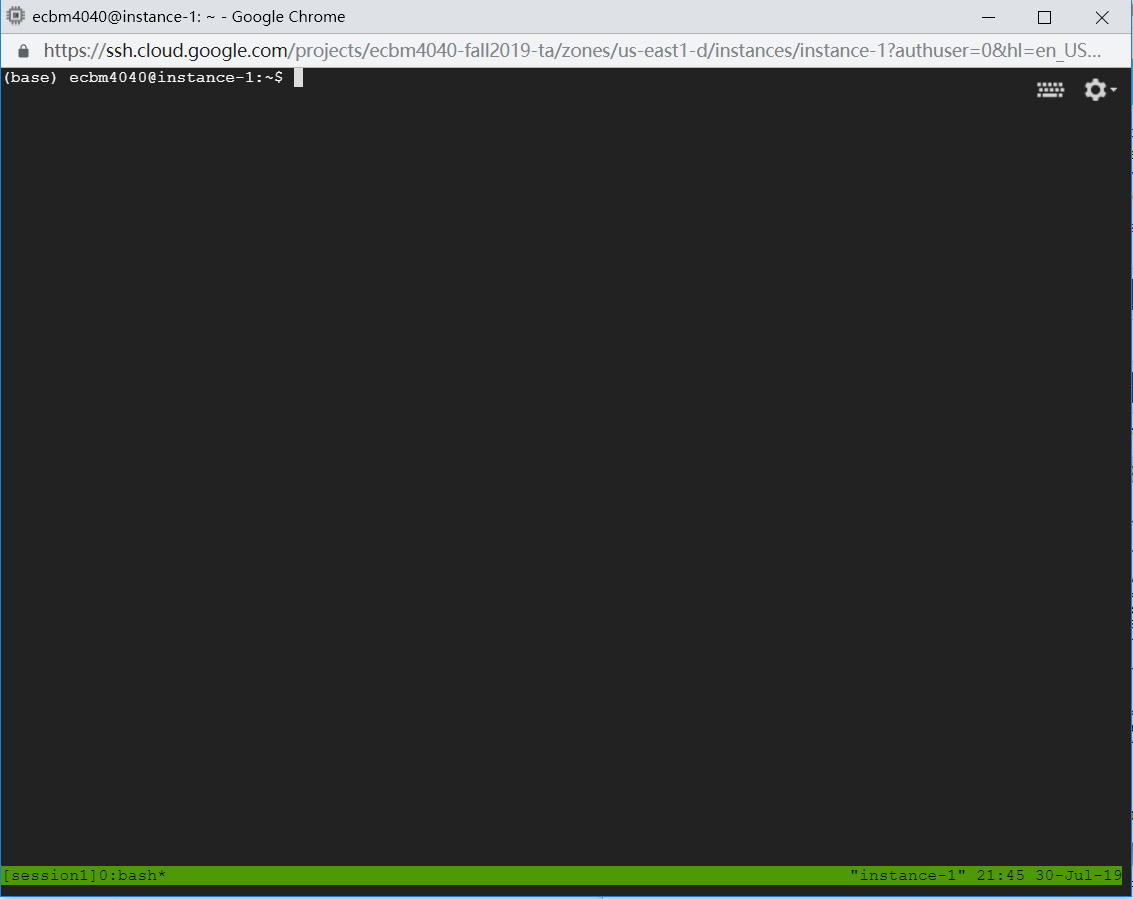

Next, create a Tmux session.

(envTF113)ecbm4040@your-instance-name: $ tmux new -s session1Then you will be in the session named 'session1'.

- Now everything you run will execute within the session. For example, you can open Jupyter notebook in the Tmux command window just as we introduced in Step 5.

      ecbm4040@your-instance-name: $ jupyter notebook

For more Tmux commands, refer to this link: https://www.hamvocke.com/blog/a-quick-and-easy-guide-to-tmux/

The biggest advantage of Tmux here is that it lets your process keep running even after you have disconnected from your instance. If your network connection drops unexpectedly, or you need to close your laptop, the process will still be running in the session unless you kill the whole session. We highly recommend training any time-consuming network in a Tmux session.


- runipy, run ipynb as a script. When you are running Jupyter on a remote server or on cloud resources, there are situations where you would like Jupyter on the remote server or cluster to continue running without termination when you shut down the laptop or desktop that you used to access the remote server. Tmux is helpful here, but you also need to run Jupyter in a command-line environment. runipy can help with this.

- First, install runipy in your virtual environment (you may already be in a Tmux session created in 'envTF113').

      (envTF113)ecbm4040@your-instance-name: $ pip install runipy

- Suppose you've opened Jupyter notebook and used SSH to connect to it. Attach to the Tmux session you created ('session1' here), split the window into panes, and switch to the new pane; there you can use runipy to run your .ipynb file.

For more details, see http://deeplearning.lipingyang.org/2018/03/29/run-jupyter-notebook-from-terminal-with-tmux/


ECBM E4040 Neural Networks and Deep Learning, 2019.

Columbia University